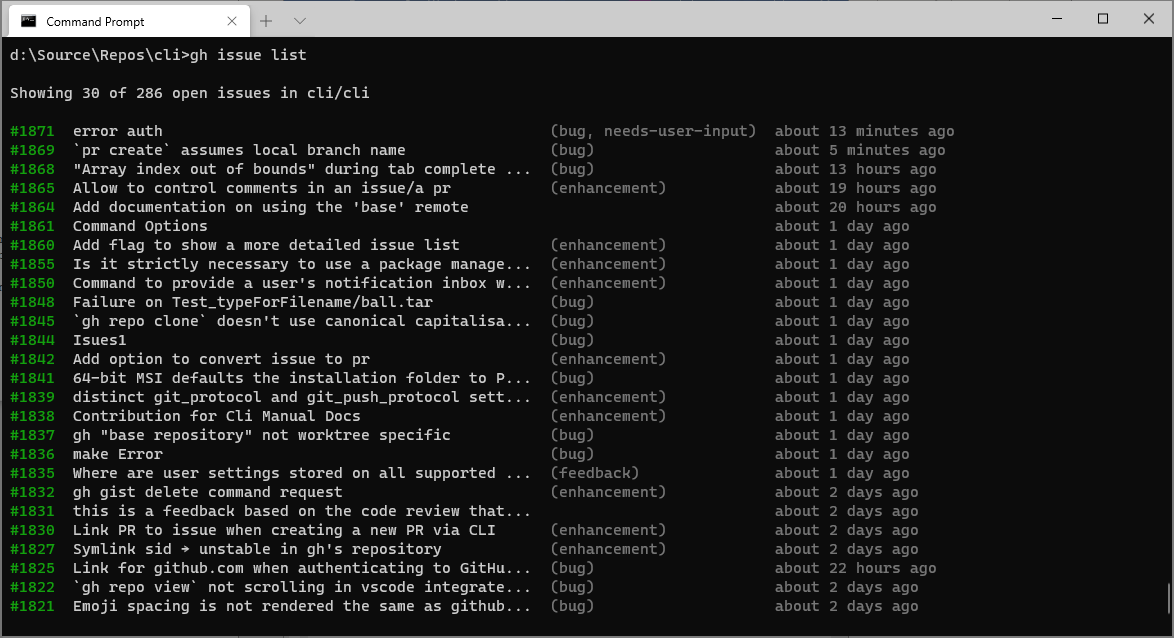

When Pipeline A runs and executes the az command, it prints the echoed secret from the Azure CLI response - but masked. Let’s assume there's a resource definition in a pipeline called Pipeline A that consumes a secret called “ MY_SECRET”. These incidents happened where developers set up separate pipelines for create and delete actions (or equivalent). Incidents where folks who almost escaped without mishap but didn’t make it in the end were unfortunate to witness and yet fun to find. Kudos! Pattern 3: The Folks Who Almost Had It Right This usage pattern yielded zero credentials. In these workflows, the developers either manually masked the entirety of the returned values or stored the responses in variables rather than letting them echo to the log. Some developers knew, or assumed, that the Azure CLI would leak sensitive data. So no bell today.įigure 6: Workflow logs with masking and credentials leakage Pattern 2: Folks Who Had It Right For the remaining cases, I encountered partial or insufficient maskings that still left secrets and sensitive data exposed. In the majority of the “saved by the bell” cases, I wasn’t able to find full raw credentials. Whether they knew about the nature of the output of the tool, I can’t tell. GitHub Actions later masked, or partially masked, the output of the tool, protecting the tool users. This is when the developers defined the about-to-be-echoed credentials as secrets in the workflow - but mainly for the input phase. In some implementations, though, I saw developers getting “saved by the bell”. This implies, then, that their logs contain raw sensitive information. The developers weren’t aware that the tool is spewing their credentials, so they didn’t put any mitigations in place. Use cases among people who didn’t anticipate the issue are especially problematic and an easy target for attackers. 
Where some developers didn’t know about the tool’s tendency to emit sensitive data, others did know (or at-least played it safe) and proactively mitigate the problem.Ĭlassifying the usages, I found three main variations of usage patterns when using Azure CLI in GitHub actions. When I looked at the Azure CLI usages, I noted that even for cases where the tool was “supposed” to leak credentials, the developers’ use differentiated between a full leak or the mitigation of it. To do so, I cloned the Azure CLI repo and looked through the various modules ( = about 64 options for different CMDs when running az CMD, e.g., “ az webapp ”), searching for existing leaks in CI logs using command variants.įigure 5: az commands list Observing Usage Patterns of the Azure CLI in the Wild, Wild GitHub Actions Moving on, I wanted to find more variants and occurrences. But given one compromised account/token with the lowest “READ” permissions - suddenly an actor can access raw production credentials and possibly escalate their privileges. So while the Azure CLI doesn’t perform anything buggy, when executed in a pipeline with the echoed credentials stored in the pipeline’s log, we suddenly find ourselves in a “who should be able to read the logs” kind of problem.įor public repositories and pipelines, this problem is easy to see and understand - random internet stalkers ( comme moi!) shouldn’t access your production database keys.įor private repositories you may get a false sense of security due to the “private” title. What’s actually problematic is the combination of where this tool is running and who can access the run logs. The Azure CLI actually echoes back this information as intended, so there’s nothing buggy regarding the tool or its output. 
- īoth of these issues showed environment variables echoing back to the log.In fact, many az functions (which are being run using the Azure CLI) echo back the accessed/created/updated/deleted resource alongside their environment variables, secrets, etc.ĭown the line, I also found the following issues: The findings' severities ranged from informative to critical. I reported the findings to the relevant vulnerability disclosure programs, and all were all accepted and fixed.

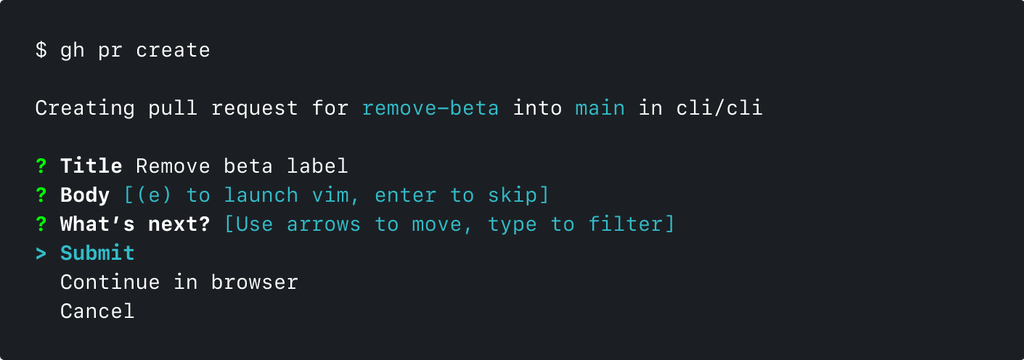

Throughout the research, I was able to disclose more findings to some other groups that I can’t disclose, per their requests. The initial lookup yielded five vulnerability reports, four to Microsoft and one to GitHub. Makes an authenticated HTTP request to the GitHub API and prints the response.Seeing that the issue is indeed true, I did an initial lookup while also trying to see if I can find other commands.
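To make the "had it right" mitigation concrete, here is a minimal Python sketch of the idea: the CLI response is captured into a variable instead of being echoed, and every string value in it is registered with GitHub Actions' `::add-mask::` workflow command before anything is printed. The helper names (`iter_string_values`, `mask_commands`) and the sample response are hypothetical and only for illustration. The sketch also assumes the response is JSON and that values are single-line. It is not the approach of any specific workflow from the research.

```python
import json
from typing import Any, Iterator, List


def iter_string_values(node: Any) -> Iterator[str]:
    """Yield every non-empty string value found anywhere in parsed JSON."""
    if isinstance(node, str):
        if node:
            yield node
    elif isinstance(node, dict):
        for value in node.values():
            yield from iter_string_values(value)
    elif isinstance(node, list):
        for item in node:
            yield from iter_string_values(item)


def mask_commands(cli_output: str) -> List[str]:
    """Build one ::add-mask:: workflow command per distinct string value.

    Masking everything is deliberately blunt: it mirrors masking the
    entirety of the returned values rather than guessing which are secret.
    """
    response = json.loads(cli_output)
    distinct: List[str] = []
    for value in iter_string_values(response):
        if value not in distinct:
            distinct.append(value)
    return [f"::add-mask::{value}" for value in distinct]


# Hypothetical stand-in for a captured CLI response (not real az output).
sample_output = json.dumps({
    "name": "example-app",
    "properties": {"MY_SECRET": "s3cr3t-value", "tags": ["prod", "s3cr3t-value"]},
})

if __name__ == "__main__":
    # Printing these lines inside a workflow step registers the masks;
    # the raw response itself is never written to the log.
    for command in mask_commands(sample_output):
        print(command)
```

In a real workflow, the captured output would come from the CLI call itself, for example via `subprocess.run(..., capture_output=True)`, and the mask commands would need to be printed before any later step logs the values.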
